Stressed out? Need to talk? Turning to a chatbot for emotional support might help.

ComArtSci Associate Professor of Communication Jingbo Meng wanted to see just how effective artificial intelligence (AI) chatbots could be in delivering supportive messages. So she designed a study and used a chatbot development platform to test it out.

“Chatbots have been widely applied in customer service through text- or voice-based communication,” she said. “It’s a natural extension to think about how AI chatbots can play a role in providing empathy after listening to someone’s stories and concerns.”

Chatting it up

In early 2019, Meng began assessing the effectiveness of empathic chatbots by comparing them with human chat. She had been following the growth of digital healthcare and wellness apps, and saw the phenomenal growth of those related to mental health. Her previous collaboration with MSU engineering colleagues focused on a wearable mobile system to sense, track, and query users on behavioral markers of stress and depression. The collaboration inspired her to use chatbots that initiate conversations with users when the behavioral markers are identified.

“We sensed that some chatbot communications might work, others might not,” Meng said. “I wanted to do more research to understand why so we can develop more effective messages to use within mental health apps.”

Meng recruited 278 MSU undergraduates for her study and asked them to identify major stressors they had experienced in the past month. Participants were then connected through Facebook Messenger with an empathetic chat partner. One group was told they would be talking with a chatbot; another understood they would be talking with a human. The wrinkle? Meng set it up so only chatbots delivered queries and messages, allowing her to measure whether participants reacted differently when they thought their chat partner was human.

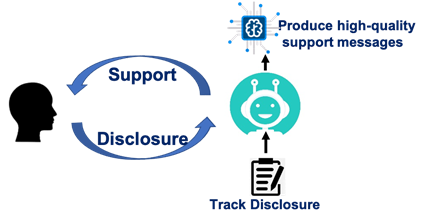

Meng also varied the level of reciprocal self-disclosure participants would experience during their 20-minute sessions. Some chatbots shared their own experiences as a way to evoke empathy. Other chatbots simply expounded on their own personal problems without validating the participants’ problems.

With the exception of the different reciprocal self-disclosure scenarios, the content and flow of conversations were scripted exactly the same for chatbots and for the perceived human chat partners. Chatbots asked participants to identify stressors. They asked how participants felt. They probed why participants thought stressors made them feel certain ways. Then chatbots shared their own experiences.

“They were programmed to validate and help participants get through stressful situations,” she said. “Our goal was to see how effective the messaging could be.”

Taking care

Meng discovered that whether talking to a chatbot or a human, a participant had to feel the partner was supportive or caring. When that condition was met, the conversation succeeded in reducing stress.

Her study also revealed that regardless of the message, participants felt humans were more caring and supportive than a chatbot.

Her scenarios on reciprocal self-disclosure told another story. Human partners who self-disclosed, whether their intent was to be empathic or just to elaborate on their own problems, contributed to stress reduction. But chatbots that self-disclosed without offering emotional support did little to reduce a participant’s stress, even less than chatbots that didn’t say anything at all.

“Humans are simply more relatable,” Meng said. “When we talk with another human, even when they don’t validate our emotions, we can more naturally relate. Chatbots, though, have to be more explicit and send higher-quality messages. Otherwise, self-disclosure can be annoying and off-putting.”

Perceiving the source

Meng conducted and analyzed the research with Yue (Nancy) Dai, a 2018 alumna of MSU’s Communication doctoral program and a professor at the City University of Hong Kong. Their findings were published in the Journal of Computer-Mediated Communication.

Meng said the study underscores that chatbots used in mental health apps work best when perceived as a truly caring source. She plans to follow up the study with additional research that examines how messaging can be designed to up the caring factor.

Mental health apps, she said, aren’t going away, and in fact, are increasing in use and availability. While the majority of people have access to a mobile phone, many don’t have ready access to a therapist or health insurance. Apps, she said, can help individuals manage particular situations, and can provide benchmarks for additional supportive care.

“By no means will these apps and chatbots replace a human,” she said. “We believe that the hybrid model of AI chatbots and a human therapist will be very promising.”

By Ann Kammerer